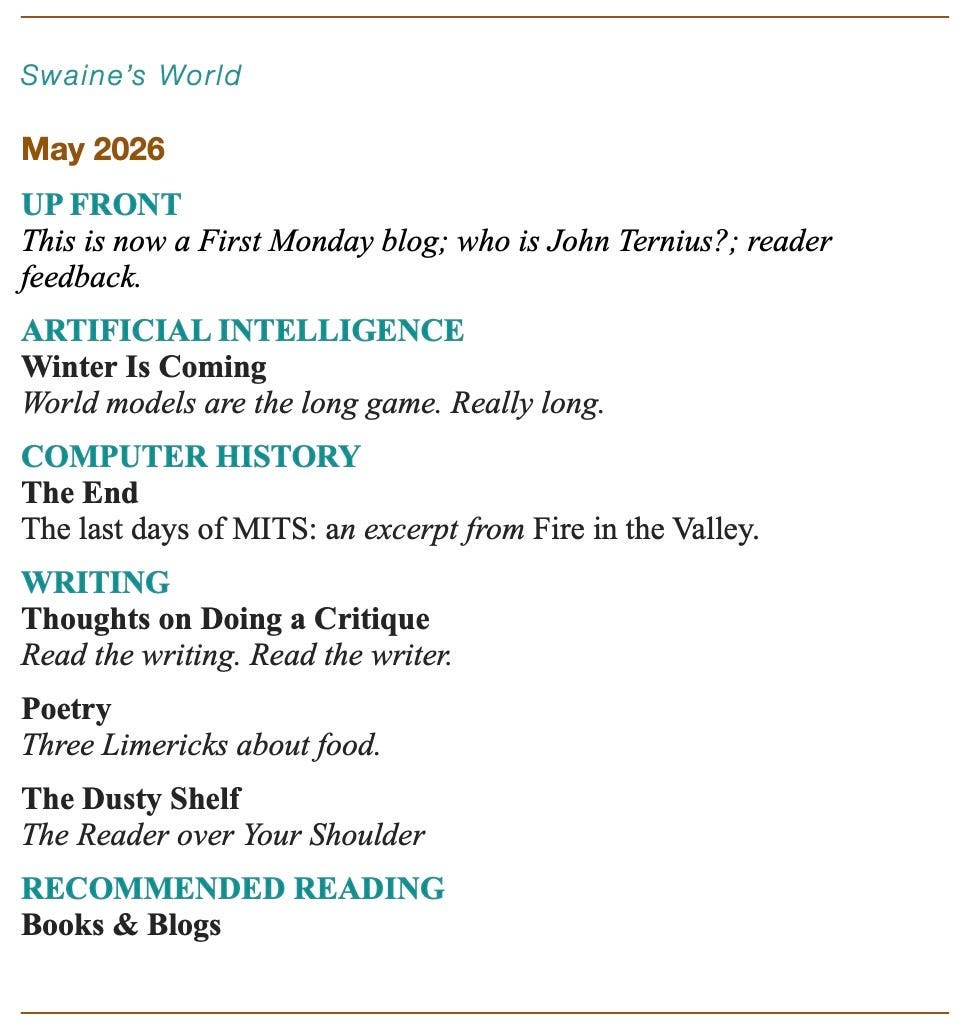

Winter Is Coming

World models for AI, Apple’s new CEO, critiquing Hemingway, limericks about food.

Up Front

This is now a First Monday blog. I’d been posting more or less weekly for years, and sometimes the posts were less useful or interesting than I’d like. I am now posting monthly, and I hope the posts will be more substantial. There are other reasons for changing the frequency: I subscribe to more blogs than I can read, and I suspect that’s true for you as well. A few fewer nagging entries in the inbox may be a welcome change. Also, monthly publication is something that has been natural to me since I created my first magazine when I was twelve: it’s magazine mode. And I’m now an octogenarian, so I don’t want to work so hard.

I first thought I’d release each issue (OK, post) on the first of the month, but the experts say that the day of the week is an important factor in blog posting, so I’m shooting for First Monday: the first Monday of each month. But if I miss it by a day or two once in a while, it’s because I’m an octogenarian.

But where did the political stuff go? Facebook. I’m now posting all my political writing on my Facebook page. It’s about focusing the blog better, not because I’m shy about my political views. And I have some: I came of age politically in the Sixties. Did I mention that I’m an octogenarian?

Who Is John Ternus?

On September 1, John Ternus will become the new CEO of Apple. It thus becomes my obligation, if I want to live up to my tech news junkie rep, to wonder publicly who this Ternus guy is, and where he will take Apple.

He’s not really a mystery: He’s been with Apple for a quarter of a century, and he’s known as a popular executive, a hardware guy, and a product guy. But he’s not Tim Cook, and he will surely bring his own thinking to running Apple.

The $599 MacBook Neo is one clue that he will break some Apple rules, in this case the premium price or fat profit margin rule. The Neo is proving to be a surprising success in its own right. Engadget calls it “a design risk that ultimately paid off.” Will it cut into sales for Apple’s more expensive models? Will it impact Apple’s overall profit margin? That kind of calculation is not my specialty: I’m only good at math if there are no currency symbols involved. But it is clearly, for Apple, a bold move.

I’d argue that the foldable iPhone, which he will personally introduce in September, also breaks a rule: what I claim, without evidence, was a Steve Jobs rule against unnecessary complication, meaning a feature that you don’t need and that could break. Anyway, it seems like a Steve Jobs rule. That doesn’t mean the product won’t be a hit, though. After all, foldable phones are nothing new, except for Apple.

Here’s the brief bio, per Wikipedia. Two details intrigue me: “For his senior project, he developed a mechanical feeding arm operable by individuals with quadriplegia using head movements.” And: “Ternus began his career as a mechanical engineer designing virtual reality headsets at Virtual Research Systems.” TechCrunch thinks Apple might deliver a tabletop robot that sounds a lot like a descendant of that senior project.

And maybe Apple will finally get its AI act together. Steven Levy thinks so. If so, it will be done Apple’s way: tightly integrated into the Apple ecosystem and sold as enhanced capabilities of Apple products. Because Apple designs and sells products, and Ternus is a product guy.

But to me the most intriguing take is Tim Bajarin’s prediction that Ternus will lead Apple into an augmented reality future. If that truly comes to pass in the next decade, it will be fun to watch.

Reader Feedback

Old friend Jim Bonang wrote me a long email suggesting what I should focus on in the blog. He’s bullish on AI coverage. “It’s the story of the century, rivaling the Internet and World-wide Web for impact. Your readers are very nervous and looking for your insights on where AI is taking the software industry.” And he thinks computer history never gets old. In fact, “Computer history is now ‘a thing.’ Vintage Computer Fairs have popped up around the country. There’s plenty of nostalgia out there. People love it.” He recommends a bunch of computer history sites. “This site preserves OS versions since System 1 in emulation. (It’s pretty cool and covers NeXTSTEP too.)” I may share some of those and other sites in future installments of my Computer History section. Jim’s not big on poetry, but I’m afraid I’m going to keep posting the stuff. And he’d like me to make the issues of PragPub magazine available. I’m thinking about it.

Artificial Intelligence

Winter Is Coming

Artificial intelligence is where the money is (going) right now. (And the power and the water.) And world models are AI’s next frontier. Don’t take my word for it.

In InfoWorld, Yash Mehta, a journalist who writes about IoT, data management, and AI, posits that world models are the next big paradigm shift in AI. World models don’t just operate within a corpus of words (tokens) but tap into the physical environment. And that’s what is required for real artificial intelligence, he claims:

“To truly achieve artificial general intelligence (AGI), world models will need to go beyond pattern recognition to capture how the world actually works. A system capable of general reasoning must understand relationships — physical, social and causal — well enough to transfer knowledge between unfamiliar situations.”

To show how serious his claim is, Mehta quotes Yann LeCun, former chief AI scientist at Meta: “within three to five years, [world models] will be the dominant model for AI architectures, and nobody in their right mind would use LLMs of the type that we have today.”

LeCun recently quit Meta to pursue world models. He’s raised a billion dollars in backing. So has another AI pioneer, Fei-Fei Li. She jumped in even before LeCun.

(Fei-Fei Li is the Godmother of AI. She is a Stanford professor of computer science and has worked in artificial intelligence, machine learning, deep learning, computer vision, and cognitive neuroscience. She was the director of the Stanford Artificial Intelligence Laboratory from 2013 to 2018. She also served as Chief Scientist of AI/ML at Google Cloud. Oh, and she established ImageNet, the huge visual database crucial for research in visual object recognition. Her book, The Worlds I See: Curiosity, Exploration, and Discovery at the Dawn of AI, is one of Barack Obama’s recommended books on AI.)

So just what are world models?

World models train not on massive bodies of text (for example, my book Fire in the Valley, which Anthropic sucked up to train its models and for which it now owes me money, but I digress) but on something more abstract: rules, patterns, basically, how things work. This could mean how objects behave in physical space, the sort of knowledge a robot needs to pour a cup of coffee. In that case you’re talking about teaching the software the relevant scientific laws. Or it could mean business or law or medicine or any domain where rules and patterns can be identified.

As the limitations of LLMs become apparent, world models seem nicely suited to take AI to the next level.

But there’s a problem.

Boom and Bust

A little history:

Artificial intelligence as a field wasn’t created in November 2022 with the release of ChatGPT by OpenAI. AI has a long history, dating back to 1956 and the Dartmouth Summer Research Project on Artificial Intelligence, the workshop for which John McCarthy coined the term and which launched the field. And that 70-year history is a cycle of boom and bust, hype and disillusionment. Periods of AI frenzy and investment led to unfulfilled promises, culminating in periods of reduced funding, interest, and research. This has happened twice: in the late 1970s and from the late 1980s to the mid-1990s. These “AI winters” were “caused by overpromised capabilities, limited computing power, and commercial failure,” says Wikipedia, and the name was apt, because investment and research and development were effectively frozen.

There were successes in those earlier boom periods. An approach called Expert Systems sought to collect the knowledge of human experts in limited, vertical domains, like diagnosing particular diseases. Another way of restricting the domain was to create an artificial world, like blocks on a tabletop, and teach the software how to navigate it to achieve simple tasks, like stacking three blocks.

And the current boom is producing some successes. But this time around the investment is much greater, and nothing has yet been produced that promises to pay off that investment. Rather, the brittleness and other shortcomings of Large Language Models are becoming increasingly evident. And it’s becoming clearer that there are things the models can never do.

And as I’ve tried to indicate, what LLMs lack is exactly what world models offer. So it is not surprising that so many people think that bringing real-world knowledge to AI systems will be a big deal, an even bigger shift than LLMs were. AIs that experience the world would be closer still to AGI.

Current AI companies are hedging their bets against the collapse of funding for LLMs. Or, more charitably, they are thinking ahead and working on other AI approaches. Hence the flurry of attention to world models, which are beginning to be seen as the next wave in AI.

These investors may be disappointed.

The Real World Is Hard

James Wang’s book What You Need to Know About AI won this year’s American Legacy Award for Best Nonfiction. Wang says that world models are really, really hard. LLMs had it easy: they were able to scale the way they did because they were fed the entire internet as their training set, at a cost of essentially nothing. (“Essentially.”) All that data was just sitting there to be harvested.

World models don’t have it so easy. If they have to train on physical-world data, they will run into some tough problems.

“Physical-world data is different. Someone has to deliberately record it. A sensor has to measure it. Analog information must be transformed into digital form. Measurement introduces error. Infrastructure costs money. And the data itself is vertical — a dataset of warehouse logistics tells you nothing about kitchen robotics.”

It’s not that the data can’t be collected. It’s just that it’s really expensive. And the verticality issue means that an investment in kitchen robotics data will only pay off in the kitchen robotics market. (That’s oversimplified, but still meaningful, I think.)

There are already successes in robotics, especially in factory automation. Wang: “Traditional automation works brilliantly in standardized, controlled environments. A car assembly line is designed so every part arrives in the same orientation at the same time. The robot just executes a pre-programmed sequence.”

But that’s not how world models would approach robotics. A world model factory robot would approach automation tasks the way humans do: with an understanding of physics and object permanence and gravity and momentum. A world model factory robot would be able to handle new tasks and changes in its environment.

The setbacks in autonomous vehicles should be a warning. The task would be easy if you only had to deal with highways and traffic lights. But to deal with the kid running into the street you need to know something about kids. We’re nowhere near solving that problem.

The strongest argument for near-term success in world models, Wang says, lies in those vertical domains where the payoff justifies the investment. “Surgery. Semiconductor manufacturing. Warehouse logistics. You don’t need to solve physics in general. You just need to solve your physics.”

That’s not a new insight. It’s what directed Expert Systems work in the 1980s. It’s a good idea, but even going vertical is very expensive.

A general world model, which is what you’d need before you could claim to be approaching AGI, would be far more difficult and expensive. Wang says, “the fact that this is so difficult even in narrow cases should inform you how monumental a totally general-purpose world model is.”

As the limitations and detrimental features of LLMs become more consequential, investors will become more skittish, and they will look to the next thing. Well, they are already. But if world models are the next thing, they will likely take decades to deliver. And the LLM boom may end before that.

If so, another AI Winter is coming.

Computer History

The End

The Pertec people managed to alienate virtually all key MITS personnel. “They kept patting us on the head, saying we didn’t understand the business,” Roberts recalled. The MITS regulars didn’t respond well to the Pertec management teams. The standard line on them was that they were “two-bit managers in three-piece suits.” The epithet was used so frequently it was shortened to simply “the suits.”

Pertec treated MITS as if it were a big business in an established industry. Before agreeing to buy MITS, Pertec executives asked Roberts to show them his five-year marketing forecast. At the time, MITS advance planning “consisted of where things would be on Friday,” Roberts said. To please the buyers, Roberts and Eddie Curry invented projections they figured would make the Pertec managers break out the champagne. They told Pertec that sales would double each year and provided a pie-in-the-sky guess of how many machines the company could move. Pertec bought it all.

Over the following year, managers came and went at Pertec in extraordinary numbers. “People based their careers on trying to live up to that [forecast],” said Curry. Mark Chamberlain had no use for the Pertec suits who’d invaded MITS: “They sent in team after team. Each team came in to knock off the previous team. Any given team had about 60 to 90 days to turn the mess into something good, but it wasn’t enough time. It was just long enough for the people to come in and switch from a position of trying to understand the problem to becoming a part of the problem. After 60 to 90 days, you were definitely part of the problem. And they’d send in the next guy to fire you.” Chamberlain left to go to work for Roberts in his lab. “I wanted out of that Pertec thing like right away,” he said. “That thing was crazy.” For a while, Chamberlain worked with Roberts on a low-priced computer based on the Zilog Z80 chip, but he soon left to pursue other opportunities.

Others were defecting from Pertec’s MITS group. Bunnell departed at the end of 1976 to start Personal Computing, one of many significant personal-computing magazines he would eventually launch. He published it from Albuquerque throughout 1977 with contributions from Gates and Allen. Andrea Lewis took over as editor of Computer Notes and changed it from a company-written newsletter to a slick magazine with outside contributions. Eventually she accepted an invitation from Paul Allen to move to Bellevue and take over Microsoft’s documentation department. Sometime after that, Chamberlain also joined Microsoft.

Several engineering people left Pertec to work for a local electronics company. Even Ed Roberts, after five months, became fed up with Pertec. “They told me I didn’t understand the market. I don’t think they understood it.” Roberts bought a farm in Georgia and told everyone he intended to become a gentleman farmer or go to medical school. Eventually he did both, with the same concentrated energy he had brought to MITS.

Pertec gradually came to regard the MITS operation as a bad venture and abandoned it. According to Eddie Curry, who stayed on longer than any other MITS principal, Pertec continued making Altairs for about a year after the acquisition, but within two years MITS was gone.

It would be hard to overestimate the importance of MITS and the Altair to the existence and form of the personal-computer industry today. The company did more than create an industry. It introduced the first affordable personal computer and pioneered the concept of computer shows, computer retailing, computer-company magazines, users’ groups, software exchanges, and many hardware and software products. Without intending to, MITS made software piracy a widespread phenomenon. Started when microcomputers seemed wildly impractical, MITS pioneered what would eventually become a multibillion-dollar industry.

If MITS was, as writer David Bunnell’s ads proclaimed, number one in the business, the scramble to be number two was won by one of the most idiosyncratic of the early microcomputer companies…

From Fire in the Valley by Michael Swaine and Paul Freiberger, the seminal history of the magical time when garage-shop electronics hobbyists did an end run around the computer priesthood and created an industry and fomented a revolution.

“If you’re going to read one history book this decade, read this one. You need to know the hilarious saga of the wizards and the wing nuts and the little miracles by which they created everybody’s future.”

— John Perry Barlow. Pick up Fire in the Valley at pragprog.com.

You can (and should!) buy the current edition of Fire in the Valley from the Pragmatic Bookshelf in electronic form (PDF for desktop/tablets, epub for Apple Books and e-readers, and mobi for Kindle readers) here. Or you can get the unabridged audio book in mp3, m4b, and ogg formats here. Or even better, go for the honest-to-goodness hold-it-in-your-hands paper version from Bookshop.org (United States only) here because you support independent bookstores, right? But OK, it’s on Amazon here too. Only what if you want to buy it from an independent bookstore somewhere other than the United States, you ask? No problem. You can find indie bookstores around the world here. I gotta tell you, though, the book is about 400 pages, so if you think you can just wait for me to excerpt it all here a paragraph or two at a time, you’ll be waiting about a century, and I don’t want you to have to go through that. Better just order it now, don’t you think?

Writing

Writing? What happened to “creative nonfiction”? The term “creative nonfiction” is both a loose designation for many kinds of writing, from memoir to long-form narrative reportage, and also a specific academic discipline in which you can get a degree, distinct from poetry, fiction, or drama. It was Lee Gutkind who fought to get the genre that academic respect. Here I use the term in the looser way, which means I can use it to describe most of the writing I’ve done in my career.

This part of the blog used to be titled “Creative Nonfiction,” but I’ve decided to give myself more scope. It may now include creative nonfiction, poetry, a recommendation of a book on writing, short fiction, or whatever this is:

Thoughts on Doing a Critique

This may be only useful to me. But if you ever find yourself in a writing workshop or if a friend asks you to critique something they wrote — well, here’s this:

Critiquing is not about whether you like the piece.

Critiquing is not about whether you think the piece is good or bad.

Critiquing is about offering helpful, actionable advice on the piece.

The first prerequisite for critiquing is understanding the writer’s intent.

The second prerequisite is immersion in the work.

The point of the critique is to help the work to achieve the writer’s intent.

Critiquing needs to be a dialog, but a particular kind of dialog.

Different writers want, and benefit from, different kinds of critiques.

You need to ask questions in order to discover the writer’s intent.

You need to ask questions to find out what help the writer wants.

You need to relate all this to decide what help the writer needs.

On your first reading of the piece, let yourself simply react. Feel it.

Then read again to understand why you felt the way you did.

Is it interesting? Does it flow? Does it achieve its purpose?

Critique your critique: Is it valid, is it helpful?

Is it focused on helping the work achieve the writer’s purpose?

Poetry

Three Limericks about Food

A hard-toking couple from Texas
Just opened a bed, bud, & breakfast
And said with a grin,
Come to the Baked Inn;
And we’ll party until they arrest us.

In London a toff held a luncheon
To which certain thugs brought a truncheon
And thumped on his head.
The toff firmly said,
“That’s the last time that I let that bunch in.”

I was tasting a medley of dishes
With a chef for Sicilian militias
Who turned to me slowly,
Said I’ll take the cannoli
But the knishes can sleep with the fishes.

And I’ve published a little book of my poetry. It’s a mixture: sonnets, villanelles, tall tales in verse, light verse, and yeah, a few limericks. I’ve whim-priced it at $0.99 on Apple Books. You are welcome to buy it if you are so inclined.

The Dusty Shelf

“For inclusion in our forthcoming book on English prose, we request your permission to quote a selection from your recent article in the Sunday Press. We intend to use it as an example of bad writing, and to describe each inelegant deviation from good practice in detail, concluding with a rewriting of the passage as it should have been written.”

That’s probably not how they phrased it, but I can’t guess how they managed to get so many public figures to allow their writing to be eviscerated.

The eviscerations, called “Examinations and Fair Copies,” are the delicious treats of The Reader over Your Shoulder by Robert Graves and Alan Hodge. In the first half of the book the authors enumerate their Principles of Clear Statement and their Graces of Prose. And in the second half they apply them without mercy to the works of scientists, novelists, politicians, and other public figures.

I have a lot of old books on writing, and many of them are outdated, overly prescriptive, inapplicable to online writing. But a number of them have virtues worth preserving, and some are gems deserving of rediscovery.

I think so highly of The Reader over Your Shoulder that I have two editions of the book: the original 1944 edition and the revised and abridged 1979 American edition. The abridging sadly removed critiques of writing by Daphne du Maurier, Ernest Hemingway, Aldous Huxley, Julian Huxley, and Ezra Pound, among others.

Here is an example from the original edition of their criticism of Hemingway’s writing in For Whom the Bell Tolls:

“To translate Spanish conversations into 1936 Revolutionary Spain, Ernest Hemingway was entitled to use Pilgrim Father English, ‘thou wert’, or early nineteenth-century Pennsylvania-Quaker English, ‘if thee wishes’, or twentieth-century colloquial English, ‘I suppose I am, if you say so’ — but not all three mixed up together.”

And this:

“‘But calm thyself. What passes with thee?’ The Spanish phrase from which ‘What passes with thee?’ seems to be translated is ¿Que te pasa? But it has no antique ring. Its English equivalent is ‘What’s the matter with you?’.”

Yeah, que te pasa, Ernest?

I hope it’s clear that the criticism in The Reader over Your Shoulder has a very different intent from the critiques I was writing about above. Graves and Hodge aren’t trying to help Ernest Hemingway. They are trying to help others avoid his boo-boos.

Recommended Reading

Snopes

The gold standard of the fact-checkers.

Letter from an American

Heather Cox Richardson’s reflections on current events are always insightful.

The Marginalian

If I call Maria Popova’s blog inspirational, you’ll get the wrong idea. But it is. Just go see for yourself.

Poetry Foundation

Explore poetry across eras, genres, and formats.

The Fine Art of Literary Fist-Fighting

by Lee Gutkind

How a Bunch of Rabble-Rousers, Outsiders, and Ne’er-do-wells Concocted Creative Nonfiction.

Future Tense

A Z Mackay writes weekly essays on “how technology rewrites being human.”

Educating AI

Nick Potkalitsky is spending this year using his blog to ask the critical questions regarding AI’s role in education.

Mark Watson’s Artificial Intelligence Books and Blog

Mark is the author of 20+ books (mostly on AI) and has 50+ US patents. Here he blogs about technology and life, but he also provides links to read his books for free online.

Advanced Geekery

David Gewirtz regularly delivers the geekiest tech stories, videos, and more.

Tales from the jar side

Ken Kousen’s blog is free, funny, eclectic, and geeky. He’s a Java expert, author of Mockito Made Clear, Help Your Boss Help You, Kotlin Cookbook, Modern Java Recipes, Making Java Groovy, and Gradle Recipes for Android.

Programming Leadership

I’ve known Marcus Blankenship for years and I know his leadership advice to be good in more than one sense. He is, as he puts it, “on a mission to create the next generation of human-centered leaders who support people in doing the best work of their lives.”

Rhubarb Patch

I had a rhubarb patch when I was very young. It was the one corner of the garden that Mom gave to me to maintain. I would take a salt shaker to the garden and pick a stalk of rhubarb and salt it and eat it. This is Ken Firestone’s garden of opinion and discussion.

Thanks for reading.